How Solve Intelligence Handles Invention Disclosures and Unstructured Data

If you've been drafting patents for any length of time, you know the real bottleneck is often not the drafting itself. It's the messy inputs that precede it: partial forms, internal review decks, or email threads where the inventive aspects are buried. Getting from that to a coherent starting point for a draft consumes time most practices simply can't afford.

AI can perform much of that translation work: extracting what matters, flagging what's missing, and generating the follow-up questions needed to close the gaps. But it must operate inside proper confidentiality controls, and its output requires attorney review before going near a draft. This guide covers how that works in practice in Solve Intelligence's platform.

Key takeaways

- The disclosure bottleneck is upstream; AI structures messy inputs before the drafting phase begins.

- AI extracts features, normalises terminology, surfaces gaps, and generates inventor questions, but attorney review is mandatory.

- The danger is plausible but fabricated detail, not obvious errors. Watch for AI-generated parameters or 'helpful' specifics.

- Disclosures contain trade secrets and unpublished IP. Use only tools with verified zero-training, zero-retention policies and enterprise-grade security.

- Without client approval, a sensible pilot uses anonymised or historical disclosures to define 'good' output and track key metrics over a limited timeframe.

The disclosure problem every patent practitioner recognises

Inventors don't think about their work the way a patent attorney needs to read it. That's not a criticism. It's just reality. An inventor's job is to solve a technical problem, not to document it in a way that maps cleanly onto a claim structure. So what you typically receive isn't a structured disclosure. It's a collection of artefacts from the process of inventing:

• A presentation built for a product review, with key technical details on a single slide

• Lab notebooks that capture what was tested but not why certain approaches were abandoned

• Email chains where the inventive step is explained clearly in one message and then contradicted in the next

• Partial disclosure forms where half the fields are blank or answered with 'see attached'

• Diagrams and screenshots that show how something works but don't describe it

• Audio and video recordings from inventor interviews (MP3, MP4, M4A) that need transcription and structuring

Each of these is a legitimate source of information. The problem is converting them into an official reference document that you can actually draft from, one that reconciles inconsistencies across sources where 'the device,' 'Module B,' and 'the widget' might all refer to the same thing.

Why it creates a problem downstream

Alternative approaches get mentioned once and then dropped. Parameters sit in a diagram rather than written text. Embodiments go undescribed because the inventor assumed they were obvious.

The downstream cost is real. Missed alternatives mean narrower fallback positions when claims are challenged. Inconsistent terminology complicates prosecution. And every gap that slips through at intake becomes either a clarification cycle later, or a missing support problem you're dealing with under time pressure.

Structured intake addresses those risks before they become drafting problems.

What AI actually does during invention disclosure intake

'AI-assisted intake' can mean a lot of things. In practice, the useful functions are specific, and it's worth being clear about what each one does because they carry different review requirements.

It reads across all your sources at once

Rather than working through a presentation, then a set of emails, then a transcript separately, AI can synthesise across all inputs simultaneously and produce a consolidated summary: what the invention is, how it works, and what's claimed to be new. The AI considers all details, no matter where they appear.

It extracts the technical substance

Components, method steps, parameters, operating conditions, material properties, variants. AI pulls these out of narrative text and gives you a structured view of what's actually in the disclosure and what's worth claiming. That extraction is the foundation for everything that follows.

It produces an application-ready skeleton

From the extracted content, AI builds a structured outline: the technical problem being solved, the proposed solution, key embodiments, variations, and potential advantages. This isn't a draft. It's an organised input that an attorney can review, annotate, and build from. The difference between starting from a blank page and starting from a confirmed skeleton is significant, especially on complex technical disclosures.

It tells you what's missing

Gap identification is one of the more under-appreciated functions. AI flags undescribed steps, missing parameters, embodiments that appear referenced but never explained, and functional language without a described mechanism. This is particularly useful before an inventor interview. Knowing exactly what's absent from a disclosure means the conversation is focused rather than exploratory.

It generates questions you can send to the inventor

Based on the gaps it identifies, AI produces specific questions that can go directly to the inventor by email or form the basis of a follow-up interview. Not a generic 'please add more detail' checklist, but targeted questions grounded in the actual disclosure:

'Figure 3 references a threshold value but doesn't specify it. Can you confirm the range and whether it's essential or a preferred embodiment?'

That kind of specificity makes inventor conversations productive in a way that general prompts rarely do.

How the AI-assisted intake workflow runs in practice

The sequence matters more than the number of steps. The single most common failure point is skipping confirmation with the inventor when timelines get tight. That's where errors compound.

Collect everything before processing. Disclosure form, attachments, relevant emails, diagrams, any prior art the inventor has flagged. What isn't captured here, AI can't read.

Triage first. Confirm which tool is approved for the sensitivity level of what you have. Invention disclosures aren't appropriate for general-purpose AI tools. See the confidentiality section for what to check.

Structuring pass. AI produces a consolidated invention summary, a terminology table, and an organised view of the extracted features. The attorney reviews each before anything moves forward.

Gap pass. AI produces a gap checklist, an assumptions log (places where it has inferred rather than extracted from source material), and targeted questions that can go directly to the inventor or structure the next interview. Nothing on the assumptions log is confirmed until the inventor says so.

Confirmation. Attorney and inventor go through the summary, extracted features, and assumptions log together. This is the step that converts AI output into verified content, and where multiple rounds of email follow-up typically get compressed into one focused conversation.
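The gap pass and confirmation steps above can be pictured as a small data model. The sketch below is illustrative only and does not describe how Solve Intelligence's platform is implemented; the names `Status`, `Item`, and `IntakeRecord` are hypothetical. It encodes one rule from the workflow: anything the AI inferred stays on the assumptions log, and the record is not ready for drafting until the inventor has confirmed every such item.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    EXTRACTED = "extracted"  # taken directly from a source input
    INFERRED = "inferred"    # filled in by the AI; belongs on the assumptions log
    CONFIRMED = "confirmed"  # signed off by the inventor


@dataclass
class Item:
    text: str
    status: Status
    source: Optional[str] = None  # where the statement came from, if anywhere


@dataclass
class IntakeRecord:
    items: list = field(default_factory=list)

    def assumptions_log(self):
        """Everything the AI inferred that nobody has confirmed yet."""
        return [i for i in self.items if i.status is Status.INFERRED]

    def confirm(self, text, source):
        """Mark an inferred item as confirmed and record who confirmed it."""
        for item in self.items:
            if item.text == text and item.status is Status.INFERRED:
                item.status = Status.CONFIRMED
                item.source = source
                return
        raise ValueError(f"no unconfirmed assumption matching: {text!r}")

    def ready_for_drafting(self):
        """True only once the assumptions log is empty."""
        return not self.assumptions_log()


# Hypothetical example data, not taken from a real disclosure.
record = IntakeRecord([
    Item("Sensor is polled by the control module", Status.EXTRACTED, "email, 3 March"),
    Item("Threshold is essential to the method", Status.INFERRED),
])
blocked = record.ready_for_drafting()  # False: one assumption is still open
record.confirm("Threshold is essential to the method", "inventor interview")
cleared = record.ready_for_drafting()  # True: the log is now empty
```

Keeping inferred items in a separate status, rather than mixing them into the extracted features, is what makes the confirmation meeting short: the attorney and inventor only need to walk through the open items.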

Where it works, where it doesn't, and what to check

What AI handles well

Cross-source synthesis is where AI genuinely earns its place in this workflow. Synthesising a coherent, structured summary from disparate, unstructured inventor submissions is a mechanical, time-consuming process that AI can handle. It doesn't fatigue on slide 14, and it doesn't miss details mentioned once in a footnote.

Terminology normalisation is similarly reliable. When an inventor has used five different terms across four documents to describe the same component, AI maps them consistently. That consistency matters downstream: claim drafting gets harder and prosecution positions get weakened when terminology hasn't been settled before you start.
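As a rough illustration of what a terminology table does, the sketch below maps aliases to one canonical term and applies the mapping to a passage. It is a minimal sketch under stated assumptions, not the platform's method: the table contents, the canonical term 'the control module', and the function name `normalise` are all hypothetical, and a real mapping would be reviewed by the attorney before use.

```python
import re

# Hypothetical terminology table: canonical term -> aliases found across sources.
TERMINOLOGY = {
    "the control module": ["the device", "Module B", "the widget"],
}


def normalise(text, table):
    """Replace every known alias with its canonical term (case-insensitive)."""
    for canonical, aliases in table.items():
        # Longest aliases first, so a short alias never clips a longer one.
        for alias in sorted(aliases, key=len, reverse=True):
            pattern = r"\b" + re.escape(alias) + r"\b"
            text = re.sub(pattern, canonical, text, flags=re.IGNORECASE)
    return text


first = normalise("Module B polls the sensor.", TERMINOLOGY)
second = normalise("The widget stores the reading.", TERMINOLOGY)
# first  == "the control module polls the sensor."
# second == "the control module stores the reading."
```

Plain string replacement like this ignores sentence capitalisation and cannot tell when two terms only look like synonyms, which is one reason the mapping itself needs attorney review.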

Gap identification can change the quality of inventor conversations too. Coming into an interview knowing specifically what's absent from a disclosure, rather than just that it feels incomplete, means you can ask the right questions rather than exploring broadly and hoping something surfaces.

What to watch for

Typically, it's not the obvious errors that cause real problems. It's output that reads as complete and well-supported but contains details that aren't actually in the disclosure.

AI may fill gaps with technically plausible specifics: a threshold that seems reasonable given the application, a material property that fits the context, a range that aligns with common practice in the field. None of that is supported by the disclosure. If it makes it into a claim, you have an enablement problem.

A few specific patterns worth checking every time:

• Invented aspects. Any parameter, threshold, range, or performance claim that doesn't appear in the source inputs. Flag it; don't use it until the inventor has confirmed it.

• Collapsed alternatives. AI may merge competing approaches or variant embodiments into a single description. Check that distinct alternatives are preserved as distinct rather than averaged into a single preferred embodiment that happens to be cleaner to summarise.

• Examples treated as limitations. If AI positions a specific example as a defining feature rather than an illustration, your independent claims can end up narrower than they need to be. Check that examples are framed as examples, not as requirements or essential components.

• Inconsistent terminology. AI may normalise terms in the summary but revert to inconsistent language in the specification. The terminology table needs to be enforced across all outputs, not just applied once at the start.

Minimum checks before drafting from AI outputs

• Every key feature in the structured summary traces back to a source input or confirmed inventor statement

• No parameters, thresholds, or performance claims appear that aren't supported by the disclosure

• Alternatives and fallback positions are listed as distinct, not merged into a preferred embodiment

• Examples are framed as illustrations, not as required limitations

• Terminology is consistent across summary, spec outline, and claims
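The first two checks in the list above lend themselves to a simple automated pre-screen before human review. The sketch below is one hedged way to do it, not a description of any product feature: `Feature`, `untraced`, and `unsupported_numbers` are hypothetical names, and the example text is invented for illustration.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Matches integers and decimals such as "60" or "0.5".
NUMBER = re.compile(r"\d+(?:\.\d+)?")


@dataclass
class Feature:
    text: str
    source: Optional[str]  # source input or inventor statement; None if untraced


def untraced(features):
    """Features that do not trace back to any source or confirmation."""
    return [f for f in features if not f.source]


def unsupported_numbers(summary, sources):
    """Numeric values in the AI summary that appear in none of the sources."""
    known = set()
    for text in sources:
        known.update(NUMBER.findall(text))
    return set(NUMBER.findall(summary)) - known


# Hypothetical example data.
sources = [
    "The heater holds the bath at 60 degrees.",
    "Figure 3 shows the threshold.",
]
summary = "The heater holds the bath at 60 degrees with a threshold of 0.5 V."
features = [
    Feature("bath held at 60 degrees", "lab notebook"),
    Feature("threshold of 0.5 V", None),
]

flagged_values = unsupported_numbers(summary, sources)      # {"0.5"}
flagged_features = [f.text for f in untraced(features)]     # ["threshold of 0.5 V"]
```

This comparison is deliberately crude: it matches numbers as strings, so '60' and '60.0' count as different values and units are ignored. It can surface candidates for the attorney to check, but it does not replace the review itself.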

Confidentiality and governance

Invention disclosures occupy a specific risk category in patent work. They contain non-public technical information and trade secrets. In a first-to-file system, a confidentiality failure isn't just a professional conduct issue. It can directly affect patentability. That requires deliberate governance rather than ad hoc decisions at the matter level.

The practical question isn't whether to use AI on disclosures. It's which tool, in which environment, with which controls. A few principles that should be in place before any disclosure gets processed:

See our full article on how to run a due diligence checklist on AI tools for patent practice.

Where Solve Intelligence fits

Messy disclosures are not an edge case. They're the norm. Inventors communicate in the formats that fit their work in research and development processes or in academia, and translating those formats into something practitioners can draft from is time-consuming, error-prone, and easy to underestimate as a source of downstream problems.

AI-assisted intake does not entirely solve the underlying challenge. But it compresses the translation work significantly: enhanced disclosures for drafting, fewer clarification cycles, and gaps caught before they become problems during prosecution.

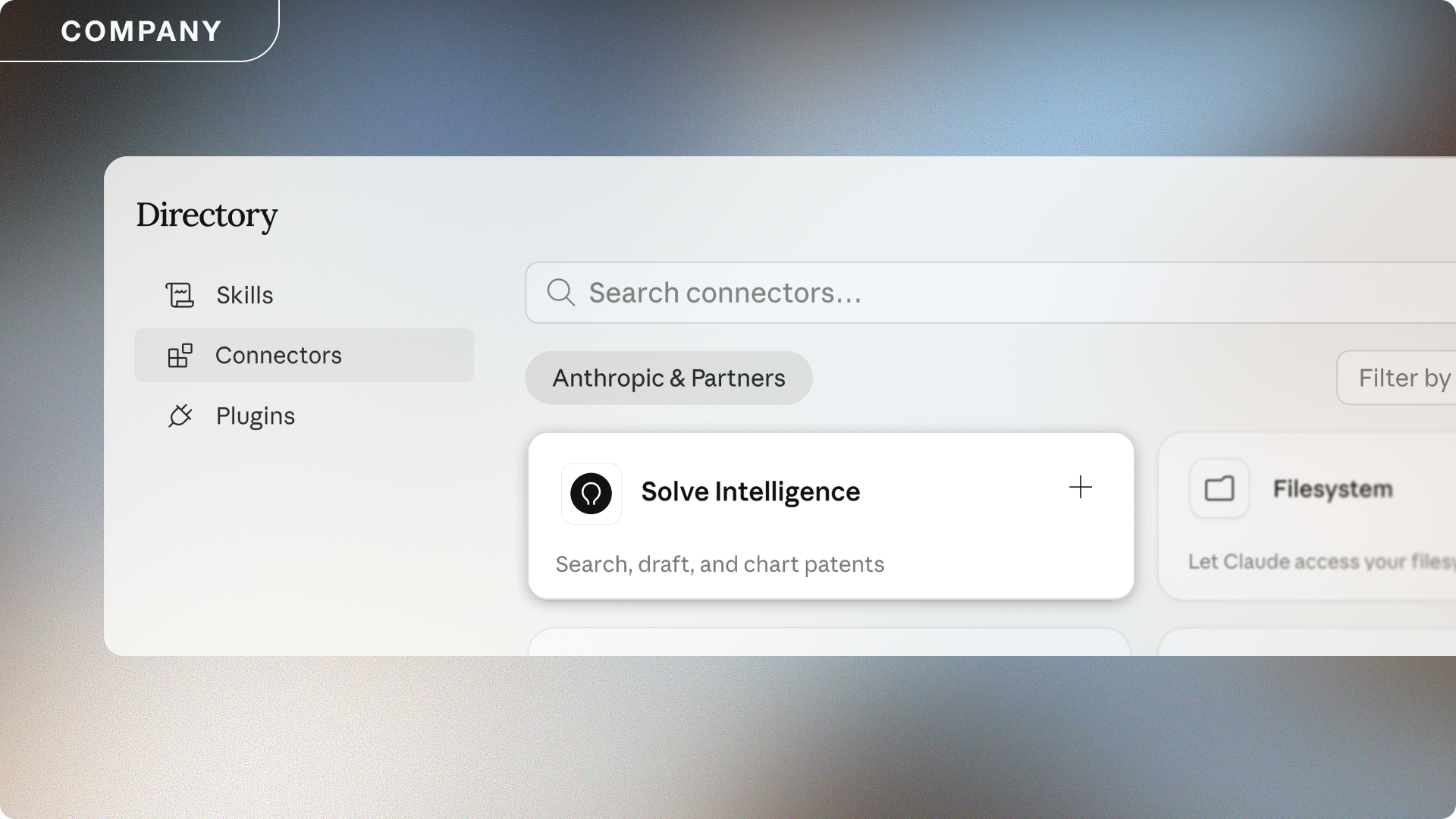

Solve Intelligence is built for patent workflows, not adapted from broader legal AI and not a general document tool with patent-specific prompting added on top. The intake work described in this guide is one of the core problems the platform addresses: taking fragmented, inconsistent disclosure inputs and producing structured drafting artefacts that are easier to review, easier to build from, and appropriate for the confidentiality requirements of patent practice.

The platform is used by 500+ IP teams globally, including DLA Piper, Siemens, Finnegan, and Perkins Coie. Confidentiality controls are built into the architecture: no training use of customer inputs or outputs, sandboxed customer environments, and project separation to reduce cross-matter risk. For teams running formal security reviews, the Solve Trust Centre provides full documentation.

Want to find out more? Don’t wait, book a demo today at solveintelligence.com or contact partnerships@solveintelligence.com.

Frequently asked questions

What does it mean for AI to 'structure' an invention disclosure?

Converting fragmented, multi-format inventor inputs into an organised summary a practitioner can actually work from. That means extracting technical features, normalising inconsistent terminology, producing a patent-ready outline, and flagging what's missing. It's input preparation, not claim drafting.

Can AI turn emails, notes, and slides into a draft-ready starting point?

Yes, with the right workflow and review in place. AI can synthesise across multiple source formats and produce a structured summary, an organised view of extracted features, a gap checklist, and a spec outline. Everything still needs attorney review and inventor confirmation before it touches a draft, but it significantly reduces the gap between raw inventor material and something you can build from.

How can Solve Intelligence help teams structure invention disclosures and start drafting faster?

Solve Intelligence is built for patent workflows specifically. For disclosure intake, the platform handles feature extraction, terminology normalisation, gap identification, and targeted inventor question generation, in an environment designed for the confidentiality requirements of patent practice. No training use of customer data, sandboxed environments, and project separation to reduce cross-matter risk.

To discuss a governed pilot, book a demo at solveintelligence.com or contact partnerships@solveintelligence.com.

