USPTO Memo: Reminders for AI & Software Inventions

On August 4, 2025, the USPTO issued a memorandum to software-related art units about subject matter eligibility for AI and computer-implemented claims under 35 U.S.C. § 101. The memo emphasizes that it "is not intended to announce any new USPTO practice or procedure and is meant to be consistent with existing USPTO guidance".

The memo builds on, and refers to, the USPTO's July 2024 guidance update on subject matter eligibility for AI inventions. That guidance provided new examples and analytical frameworks for examining AI inventions, and this memo aims to ensure those frameworks are applied consistently across art units.

The memo addresses four areas where examiners have applied the Alice/Mayo framework inconsistently: mental process analysis, distinguishing claims that recite a judicial exception from claims that merely involve one, analyzing claims as a whole, and determining when a rejection is appropriate.

Key Reminders from the Memo

Mental Process Limitations

For Step 2A Prong One, the memo reminds examiners that courts consider a mental process to be one that "can be performed in the human mind, or by a human using a pen and paper." However, examiners are cautioned not to expand this grouping to cover limitations that cannot practically be performed in the human mind.

The memo specifically states that "claim limitations that encompass AI in a way that cannot be practically performed in the human mind do not fall within this grouping," perhaps attempting to address a tendency to broadly apply the mental process category to AI-related claims.

"Recites" vs. "Involves"

The memo notes that "examiners should be careful to distinguish claims that recite an exception (which require further eligibility analysis) from claims that merely involve an exception (which are eligible and do not require further eligibility analysis)."

The memo uses Examples 39 and 47 from the USPTO's subject matter eligibility examples to illustrate this difference:

- Example 39's limitation "training the neural network in a first stage using the first training set" does not recite a judicial exception. Even though training involves mathematical concepts, the limitation doesn't "set forth or describe any mathematical relationships, calculations, formulas, or equations using words or mathematical symbols."

- Example 47's limitation requiring "backpropagation algorithm and a gradient descent algorithm" recites a judicial exception because it "requires specific mathematical calculations by referring to the mathematical calculations by name."

Evaluating Claims as a Whole

At Step 2A Prong Two, examiners evaluate whether claims integrate judicial exceptions into practical applications. The memo emphasizes that additional limitations should not be evaluated "in a vacuum, completely separate from the recited judicial exception."

Instead, the analysis should consider "all the claim limitations and how these limitations interact and impact each other when evaluating whether the exception is integrated into a practical application."

Improvements Consideration

Examiners can find claims eligible at Step 2A Prong Two if they "reflect an improvement to the functioning of a computer or to another technology or technical field." The memo reminds examiners to consider "the extent to which the claim covers a particular solution to a problem or a particular way to achieve a desired outcome, as opposed to merely claiming the idea of a solution or outcome."

The memo also reminds examiners to consult the specification to determine whether the invention provides an improvement. Importantly, "the specification does not need to explicitly set forth the improvement, but it must describe the invention such that the improvement would be apparent to one of ordinary skill in the art."

"Apply It" Consideration

The "apply it" consideration evaluates whether additional claim elements do more than simply instruct someone to implement an abstract idea on generic technology. The memo notes this consideration often overlaps with the improvement analysis.

The memo reminds examiners not to "oversimplify claim limitations and expand the application of the 'apply it' consideration". Claims that improve computer capabilities or existing technology may integrate a judicial exception into a practical application, whereas claims that merely use computers as tools to perform existing processes typically fail this test.

When to Make Rejections

For close calls, the standard remains the preponderance of the evidence, and examiners "should only make a rejection when it is more likely than not (i.e., more than 50%) that the claim is ineligible." The memo states that "a rejection of a claim should not be made simply because an examiner is uncertain as to the claim's eligibility."

Takeaways

The memo reiterates existing USPTO guidance in the hope of ensuring a consistent approach to assessing subject matter eligibility under 35 U.S.C. § 101 across art units. Whether it achieves that remains to be seen, but it may at least give practitioners clarity on how examiners should apply the law, particularly to AI-related inventions.

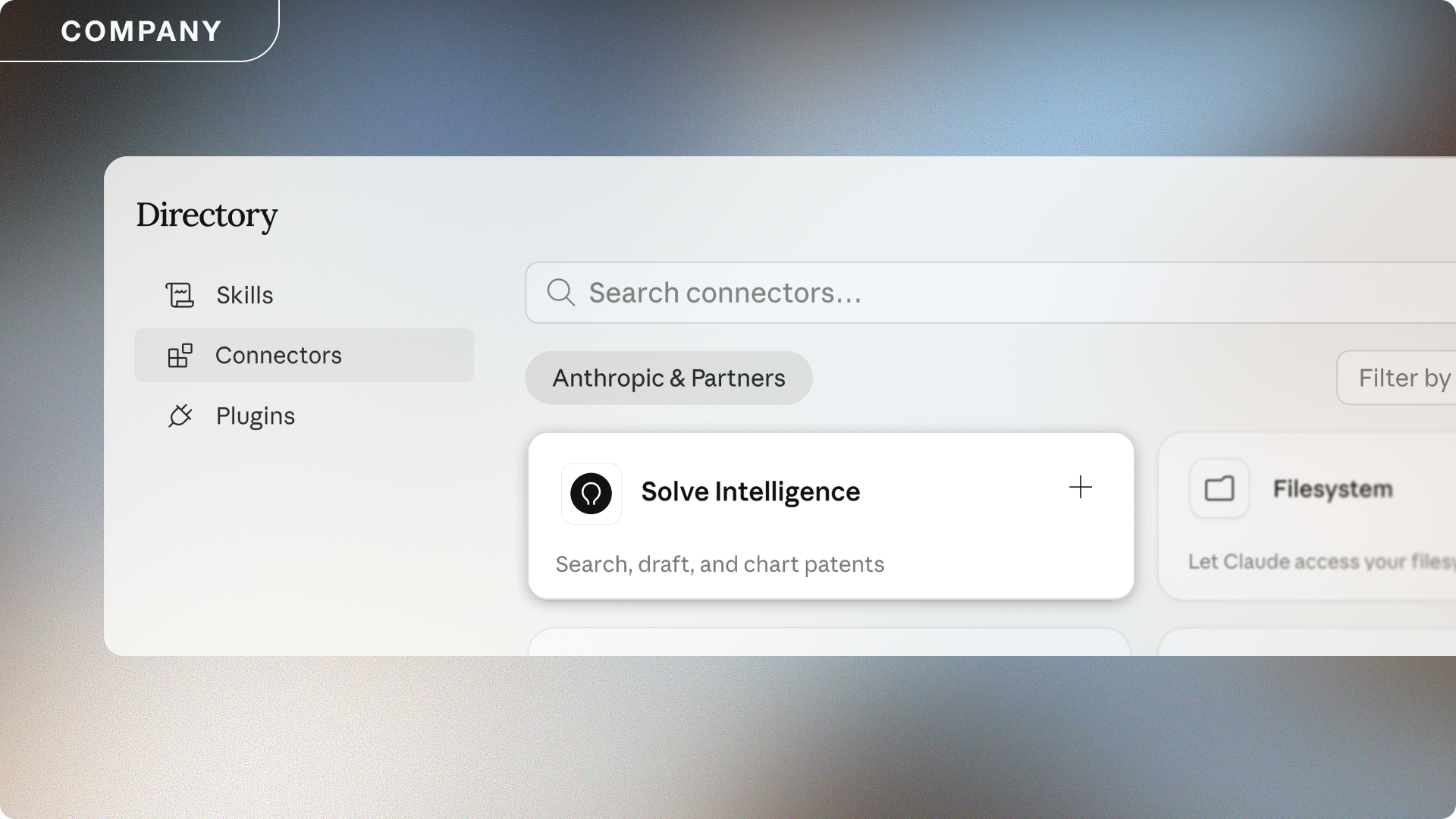

At Solve Intelligence, we track developments in patent law to build better AI-powered tools for patent prosecution. Our Patent Prosecution Copilot™ can help practitioners navigate complex eligibility issues when responding to rejections under 35 U.S.C. § 101.