How to Talk to Clients About Using AI in Patent Drafting

Artificial intelligence is no longer a theoretical issue in patent drafting, and many firms are already using AI-assisted workflows in some form. The harder question now isn’t whether to use AI, but how to talk to clients about using it.

Key insights

- Focus on better drafting quality, enforceability, and fewer avoidable downstream problems.

- Walk through data handling so confidentiality and retention protections are easy to trust.

- Explain that inventorship stays human and the attorney remains responsible for every word.

- Keep the process clear and documented so expectations stay aligned from day one.

For some clients, AI usage signals efficiency and modernisation. For others, it raises immediate concerns about confidentiality, inventorship, and quality control. Those concerns are legitimate, so the key is to approach conversations about AI in a way that is structured, transparent, and grounded in professional responsibility.

In practice, the most effective discussions with clients will focus on outcomes rather than technology.

This article provides a structured framework to use when talking to clients about using AI, such as Solve Intelligence’s Patent Drafting Copilot™, in patent drafting.

1. Lead with quality gains and risk management

Clients are not buying “AI”. They are buying drafting quality, long-term patent enforceability, and reduced invalidity risk.

Used properly, AI-supported drafting and review can improve structural discipline in a way that is difficult to achieve consistently through manual workflows alone. Importantly, this is not just about spotting issues at the end of the process; AI can also help prevent them from arising in the first place.

For example, AI tools can:

- Maintain consistent terminology across the specification

- Provide support for potential future amendments

- Avoid unnecessary limiting language that could affect scope

- Surface potential clarity issues or amendment vulnerabilities before filing

In patent practice, many downstream problems arise not from flawed legal strategy, but from avoidable internal inconsistencies introduced during drafting. These issues may only become visible years later, perhaps during opposition proceedings or litigation, by which time amendment flexibility is limited.

When AI is embedded within the drafting workflow itself, it functions as a form of structured guardrail. It not only supports the attorney while the document is being built, but also audits it before filing.

How Solve achieves quality control

At Solve Intelligence, we see AI not as a replacement for the drafter but as structured attorney support and a consistent review layer. Applications drafted with the support of Solve’s AI benefit from real-time structural assistance, while drafts prepared outside the platform can still be analysed for terminology alignment, consistency, and support.

In both cases, the attorney retains full control. The system surfaces risks and structural issues, but professional judgment determines the final text.

Framed this way, the conversation shifts from “Are you letting AI write patents?” to “How are you reducing avoidable drafting risk?”

2. Address confidentiality and security without hesitation

For most sophisticated clients, the primary concern is data security.

They want to know where their invention disclosure goes, if and where it is stored, whether it is used to train external models, and what contractual safeguards exist.

It is crucial to distinguish between consumer AI tools and enterprise-grade legal AI systems.

How Solve handles security

At Solve Intelligence, we adhere to the highest security standards. We are SOC 2 certified, with formalised policies around data handling, access controls, and auditability. We also comply with ISO 42001 for AI management systems, as well as GDPR and CCPA.

Additionally, we implement Zero Data Retention (ZDR) policies with our model providers, meaning client data processed through Solve's platform is not retained or used to train any of the underlying models.

From a client perspective:

- Data is handled within a controlled, secure environment

- It is not used for model training

- Access is restricted and governed

- Professional responsibility remains with the attorney

If you are using AI in your workflow, you should be able to clearly and confidently explain how client data is treated and what safeguards are in place.

Open conversations with clients build trust far more effectively than avoidance.

3. Clarify inventorship and professional accountability

Inventorship concerns occasionally arise from a broader narrative that AI “creates” content.

From a patent law perspective, the position is clear. Inventors must be natural persons and so inventorship relies on human conception of an inventive concept. AI-assisted drafting does not alter that analysis.

AI systems can assist with structuring text, refining language, and identifying inconsistencies, but they do not conceive inventions. The inventive contribution remains entirely human.

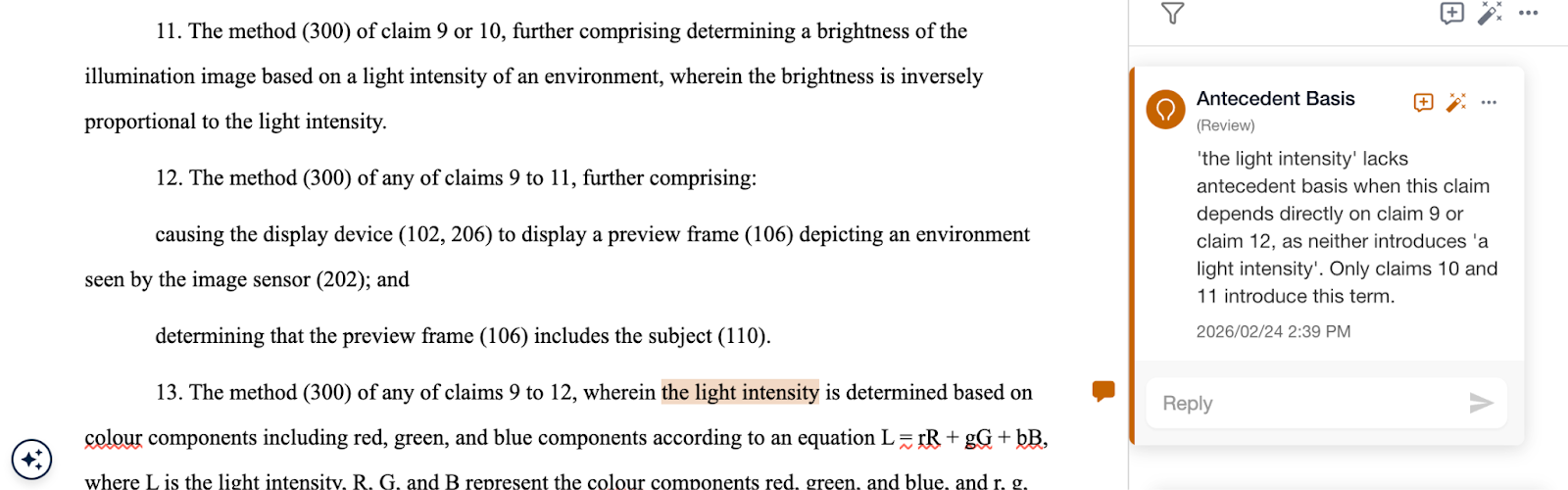

A more nuanced concern relates to quality. Clients may worry about hallucinated features, formulaic drafting, or over-reliance on automated suggestions.

To mitigate concerns, a responsible AI-assisted drafting process should include:

- Mandatory human review of all AI-generated or suggested content

- Clear separation between AI suggestions and final attorney-approved text

- Internal training on appropriate AI use and limitations

- Explicit retention of supervisory responsibility by the signing attorney

How Solve preserves inventorship and keeps attorneys in control

At Solve Intelligence, our tools are designed around this principle. Our platform will surface potential issues, but it will not autonomously amend the draft. Instead, the attorney must evaluate flagged issues and decide how best to respond.

The safeguard is not the absence of AI; it is structured human oversight.

In practice: make it clear, controlled, and documented

Rather than asking clients whether they are “comfortable with AI”, it is more effective to explain precisely how it is used in your workflow and invite questions.

Be explicit about these three things:

- What the AI actually does: for example, structured drafting support, terminology alignment, and consistency or support checks.

- How client data is protected: know and be able to explain your AI providers’ security standards and data retention policies.

- Who retains responsibility: make it clear that a qualified patent attorney remains professionally accountable.

Be ready to answer client concerns. These are often centred on:

- Inventorship: Offer assurance that AI does not conceive the invention and therefore does not affect inventorship.

- Confidentiality: Emphasise that in a properly configured enterprise environment, client data is not used to train models and is handled within a secure, access-controlled framework.

- Quality and over-reliance: Explain that AI operates within a human-supervised workflow and does not autonomously “write” patents.

Whatever the agreed position, record it. A short, documented understanding reflects procedural maturity and avoids ambiguity later.

Handled this way, the discussion becomes straightforward. The focus shifts from whether AI is being used to how drafting quality and risk control are being strengthened.

Final Thoughts: Responsible adoption is a professional obligation

The real issue isn’t whether AI should be used in patent drafting, but whether it’s used responsibly.

When deployed thoughtfully, AI can strengthen structural consistency, reduce avoidable drafting risk, and enhance long-term enforceability.

When you provide your clients with clear explanations, robust safeguards, and human accountability, AI can reinforce professional standards.

FAQs

1. Does using AI in patent drafting affect inventorship?

No. Under US, European, and UK patent law, to name a few jurisdictions, inventors must be natural persons. Inventorship depends on who contributed to the inventive concept, not who assisted with drafting.

AI tools can help structure text, flag inconsistencies, or suggest improvements, but they do not conceive inventions. The inventive contribution remains entirely human. Thus, using AI as a drafting support tool does not alter the legal analysis of inventorship.

2. Is client data used to train AI models?

That depends entirely on the system being used.

Consumer AI tools may retain or use data for training. In contrast, enterprise-grade legal AI platforms should operate under strict data handling frameworks, including zero data retention arrangements with model providers.

When assessing any AI provider, firms should be able to answer clearly:

- Where is the data stored?

- Is it retained?

- Is it used for training?

- What contractual and technical safeguards are in place?

If those answers are unclear, the system is not appropriate for confidential patent drafting work.

3. Will AI reduce the quality of patent applications?

Used improperly, it could. Used responsibly, it should improve structural quality and reduce avoidable drafting errors.

AI is most effective when embedded as a structured review layer within an attorney-led workflow. It can help:

- Maintain consistent terminology

- Surface clarity risks

- Reduce inadvertently limiting language

However, quality ultimately depends on human supervision. AI should function as a guardrail, not a replacement for professional judgment.

4. Should firms disclose AI use to clients?

There is no universal rule requiring proactive disclosure in every jurisdiction. That said, transparency builds trust.

The better question is not whether AI is being used, but how it is being used. If AI forms part of a structured, secure, attorney-supervised workflow designed to improve quality and reduce risk, explaining its role can reassure clients.

Where clients have outside counsel guidelines addressing AI, firms should of course comply with those requirements.

5. Could AI-generated errors create liability risks?

The risk does not arise from AI itself. It arises from inadequate supervision.

Professional responsibility remains with the signing attorney; “the AI suggested it” is unlikely to be an acceptable defence for drafting deficiencies.

For that reason, responsible AI use requires:

- Clear human review of all AI outputs

- Training on system limitations

- Defined internal policies

- Documented supervisory control

When these safeguards are in place, AI can reduce overall drafting risk rather than increase it.
